AI's Ceiling: Stateless, Brittle, Amnesic

I spend ₹60,000 a year on AI subscriptions.

The painful part isn’t the bill. It’s re-teaching the same business context to my “smart” assistant every 48 hours.

Last quarter, my operations lead handled a client escalation that could’ve cost us three months of revenue. She remembered every nuance: pricing sensitivity, the procurement manager’s allergy to jargon, the hidden politics between engineering and finance. That context, learned once, paid dividends for six months.

That same week, I asked Claude to draft a follow-up email to the same client. It nailed the tone. Two days later, I asked it to write another message to the same client. Blank slate. No memory. Nothing.

I wasn’t paying for intelligence. I was paying for a brilliant pattern-matcher with severe amnesia.

And that amnesia isn’t a bug. It’s woven into the architecture.

Limit 1: Statelessness (Amnesia by Design)

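Modern LLMs have no persistent internal model of your clients, your constraints, or reality. Nothing you tell them updates their weights, and anything outside the current context window is simply gone. Each new session is a fresh hire, trained from scratch.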

The System 1 Trap

LLMs are System 1 engines. Fast, intuitive, pattern-driven. But they cannot perform System 2 tasks reliably:

- Multi-step arithmetic

- Causal reasoning

- Planning from first principles

- Maintaining consistency across a 100-page document

I once asked GPT-4 to build a financial model for a business idea. The output looked pristine: clean formatting, industry metrics, plausible growth curves. But the logic connecting churn to CAC was broken—statistically plausible but causally nonsense.

It took me three hours to spot the error. A less technical founder would’ve taken that model to an investor and torched their credibility.
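
The fix for that kind of error is to make the causal chain explicit. Here is a minimal Python sketch built on one common simplified formula: expected customer lifetime ≈ 1 ÷ monthly churn, so LTV ≈ monthly revenue per customer × gross margin ÷ monthly churn. The function name, the 3:1 LTV:CAC threshold, and the sample numbers are illustrative assumptions. They are not a standard model.

```python
def unit_economics_check(cac: float, monthly_arpu: float,
                         gross_margin: float, monthly_churn: float) -> tuple[float, list[str]]:
    """Return (LTV, list of problems) under a simplified subscription model."""
    if not 0 < monthly_churn <= 1:
        raise ValueError("monthly_churn must be in (0, 1]")
    monthly_margin = monthly_arpu * gross_margin   # contribution per customer per month
    lifetime_months = 1 / monthly_churn            # expected customer lifetime
    ltv = monthly_margin * lifetime_months         # churn feeds LTV directly
    payback_months = cac / monthly_margin          # months to recover acquisition cost

    problems = []
    if payback_months > lifetime_months:
        problems.append(f"CAC payback ({payback_months:.0f} mo) exceeds "
                        f"expected lifetime ({lifetime_months:.0f} mo)")
    if ltv / cac < 3:  # rule-of-thumb threshold; adjust to your business
        problems.append(f"LTV:CAC is {ltv / cac:.2f}, below 3:1")
    return ltv, problems


ltv, problems = unit_economics_check(cac=1200, monthly_arpu=50,
                                     gross_margin=0.8, monthly_churn=0.05)
print(round(ltv), problems)
```

At 5% monthly churn a customer stays about 20 months, so an LTV near 800 can’t justify a CAC of 1,200, however clean the spreadsheet looks. Because the dependency lives in code, changing churn changes LTV automatically. Nothing can drift silently.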

Limit 2: Hallucination (Confabulation Is the Feature)

Last year, Claude hallucinated a pricing strategy for a client. It referenced “market benchmarks” and “competitive positioning.” Every data point was fabricated.

I had to send a correction email starting with: “I apologize for the misinformation…”

The Statistical Completion Engine

Every LLM output is built one token at a time from a probability calculation: “Given everything so far, which token is likely to come next?”

When the pattern is common, that likely continuation is usually accurate. When the topic is niche and the model is uncertain, it rarely says “I don’t know.” It fills the gap with the most statistically plausible fabrication.
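
Here is a toy illustration of that gap-filling. It uses two made-up next-token distributions and treats each candidate answer as a single token for simplicity. Greedy decoding produces a confident-looking answer from both. Only the entropy of the distribution reveals that the second one is close to a four-way coin flip, and chat interfaces usually don’t show you that number. The distributions are invented, and real model probabilities are not guaranteed to be well calibrated.

```python
import math


def entropy_bits(dist: dict[str, float]) -> float:
    """Shannon entropy of a next-token distribution, in bits (higher = less certain)."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)


def greedy_next(dist: dict[str, float]) -> str:
    """Greedy decoding: emit the single most probable token, however unsure the model is."""
    return max(dist, key=dist.get)


# Invented distributions; each candidate answer is treated as one token for simplicity.
common = {"Paris": 0.95, "Lyon": 0.03, "Nice": 0.02}                      # "The capital of France is"
niche = {"₹4,999": 0.26, "₹5,499": 0.25, "₹3,999": 0.25, "₹6,000": 0.24}  # "Competitor X charges"

for label, dist in [("common", common), ("niche", niche)]:
    print(f"{label}: answer={greedy_next(dist)!r}, uncertainty={entropy_bits(dist):.2f} bits")
```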

No World Model = No Sanity Check

Humans sanity-check automatically. If I said the sun sets in the north, your mental model flags the error.

LLMs have no such model. They don’t maintain object permanence. They’re the brilliant intern who answers every question with absolute confidence, even when guessing.

The problem? You can’t see when they’re guessing.

Limit 3: Brittle Reasoning (The Dependency Trap)

I once used Claude to plan a multi-stage product launch: content calendar, email sequences, webinar logistics, sales follow-up.

The first draft looked impressive. Then I noticed the timeline had us sending launch emails two weeks before the product was built. The model missed a dependency because it wasn’t tracking dependencies—it was generating a plausible-sounding sequence.
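
Dependency tracking is exactly what a few lines of ordinary code do well. Below is a minimal sketch that assumes a hypothetical plan format: each task has one scheduled date and a list of prerequisites. The task names and dates are invented to mirror the launch above.

```python
from datetime import date

# Hypothetical plan: task -> (scheduled date, prerequisite tasks).
plan: dict[str, tuple[date, list[str]]] = {
    "build_product":    (date(2024, 6, 1),  []),
    "content_calendar": (date(2024, 5, 10), []),
    "launch_emails":    (date(2024, 5, 18), ["build_product", "content_calendar"]),
    "webinar":          (date(2024, 6, 10), ["launch_emails"]),
    "sales_followup":   (date(2024, 6, 12), ["webinar"]),
}


def find_violations(plan: dict[str, tuple[date, list[str]]]) -> list[str]:
    """Flag tasks dated on or before any of their prerequisites, or with unknown prerequisites."""
    problems = []
    for task, (when, deps) in plan.items():
        for dep in deps:
            if dep not in plan:
                problems.append(f"{task}: unknown prerequisite '{dep}'")
            elif plan[dep][0] >= when:
                problems.append(f"{task} ({when}) is not after prerequisite "
                                f"{dep} ({plan[dep][0]})")
    return problems


for problem in find_violations(plan):
    print(problem)
```

An LLM can still draft the plan. A checker like this catches the emails-before-product mistake every time, because it works over explicit dependencies instead of a plausible-sounding sequence.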

The Entity Tracking Problem

LLMs track tokens, not entities.

- Ask Sora to generate a gymnast video, and limbs multiply mid-flip.

- Ask a text model to write a 50-step process, and by step 30 it’s forgotten step 5.

- Ask it to handle a client with three stakeholders, and it confuses who wants what.

This is why:

- Legal contracts need human review (entity tracking across clauses)

- Financial models fail silently (causal dependencies drift)

- Project plans look right but aren’t (sequences are mimicked, not reasoned)

The Path Forward: Neurosymbolic AI

Many researchers argue that pure LLMs, however large, won’t reach reliable reasoning on their own.

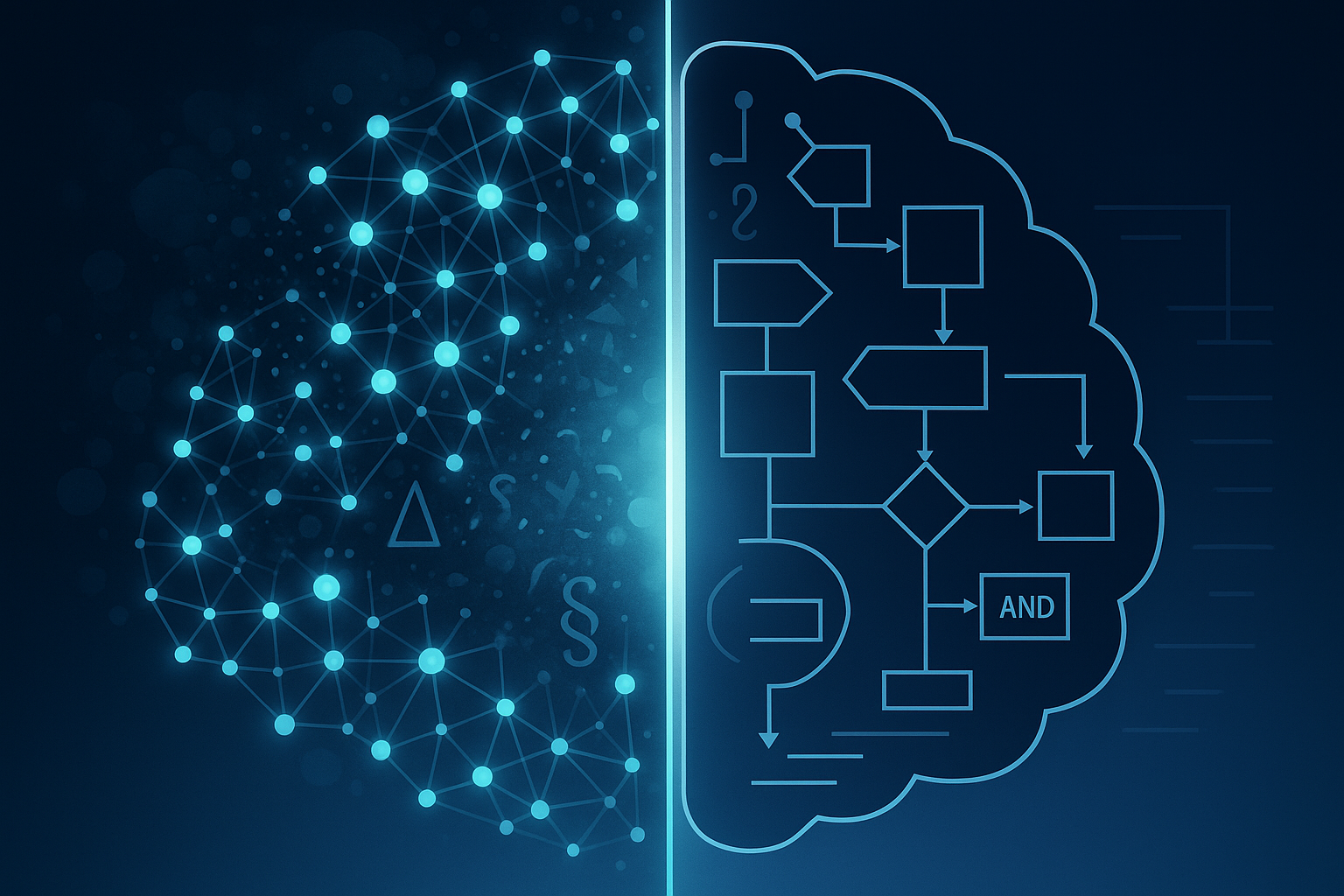

The path forward is neurosymbolic AI: a hybrid of neural networks (System 1) and symbolic logic (System 2).

- Neural Layer: Fast, pattern-driven (handles language, generates ideas)

- Symbolic Layer: Slow, rule-based, validated (checks logic, enforces constraints, maintains consistency)
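
Here is the hybrid idea at toy scale, using a pricing example. A stand-in “neural” layer proposes quotes. It is a seeded random stub here; a real system would call a model at that point. A symbolic layer accepts only quotes that satisfy explicit rules. The cost, discount cap, and margin floor are made-up numbers. The point is the pattern: propose, verify, and escalate when nothing passes.

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass
class Quote:
    list_price: float
    discount: float  # fraction, e.g. 0.2 means 20% off

    @property
    def net_price(self) -> float:
        return self.list_price * (1 - self.discount)


def neural_propose(rng: random.Random) -> Quote:
    """Hypothetical stand-in for the neural layer: fast, fluent, unconstrained.
    A real system would call a language model here."""
    return Quote(list_price=rng.choice([800, 1000, 1200]),
                 discount=rng.choice([0.05, 0.2, 0.4, 0.6]))


# Symbolic layer: explicit, auditable rules. All thresholds are made up.
UNIT_COST = 500
MAX_DISCOUNT = 0.25
MIN_MARGIN = 0.30  # net price must be at least 30% above unit cost


def rule_violations(q: Quote) -> list[str]:
    errors = []
    if not 0 <= q.discount <= MAX_DISCOUNT:
        errors.append(f"discount {q.discount:.0%} is outside 0-{MAX_DISCOUNT:.0%}")
    if q.net_price < UNIT_COST * (1 + MIN_MARGIN):
        errors.append(f"net price {q.net_price:.0f} is below the margin floor")
    return errors


def propose_valid_quote(seed: int = 0, max_tries: int = 50) -> Optional[Quote]:
    """Generate with the neural layer; keep only what the symbolic layer accepts."""
    rng = random.Random(seed)
    for _ in range(max_tries):
        candidate = neural_propose(rng)
        if not rule_violations(candidate):
            return candidate
    return None  # nothing passed: escalate to a human instead of guessing
```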

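Evidence It Works: DeepMind’s AlphaFold 2 reached near-experimental accuracy on protein structure prediction at CASP14 by pairing neural networks with structured domain knowledge. That knowledge took three forms: evolutionary information from multiple sequence alignments, a geometry-aware structure module, and a final physics-based relaxation step.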

That’s the difference between an LLM writing a plausible scientific paper and a system that can help discover a new drug.

The Playbook for Building with AI

Understanding these limits isn’t academic—it’s the difference between using AI as a force multiplier and using it as an expensive liability.

Treat AI as a Brilliant Intern, Not a Senior Strategist

Your intern is fast and tireless, and writes beautiful prose. But you’d never let them sign a contract, set pricing, or plan a launch solo.

Build Context Systems Because Amnesia Is Permanent

Maintain a living context doc with client preferences, product constraints, voice guidelines. Attach it to every prompt. Update it weekly.
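
Here is a minimal sketch of that context doc as structured data rather than a loose note. The field names and example entries are hypothetical. The same rendered context is prepended to every request, and a staleness check enforces the weekly update.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class ContextDoc:
    """A living context document; field names are hypothetical."""
    client_preferences: dict[str, str] = field(default_factory=dict)
    product_constraints: list[str] = field(default_factory=list)
    voice_guidelines: list[str] = field(default_factory=list)
    last_updated: date = field(default_factory=date.today)

    def render(self) -> str:
        lines = [f"BUSINESS CONTEXT (last updated {self.last_updated})",
                 "Client preferences:"]
        lines += [f"- {who}: {pref}" for who, pref in self.client_preferences.items()]
        lines += ["Product constraints:"] + [f"- {c}" for c in self.product_constraints]
        lines += ["Voice guidelines:"] + [f"- {g}" for g in self.voice_guidelines]
        return "\n".join(lines)

    def is_stale(self, today: date, max_age_days: int = 7) -> bool:
        """True once the doc is older than the weekly update window."""
        return today - self.last_updated > timedelta(days=max_age_days)


def build_prompt(ctx: ContextDoc, task: str) -> str:
    """Prepend the full context to every request; the model won't remember it."""
    return f"{ctx.render()}\n\nTASK:\n{task}"


# Example entries are invented for illustration.
ctx = ContextDoc(
    client_preferences={"Procurement manager": "plain language, no jargon",
                        "Finance": "highly price-sensitive"},
    product_constraints=["No custom integrations before Q3"],
    voice_guidelines=["Warm, direct, under 150 words"],
    last_updated=date(2024, 5, 6),
)
print(build_prompt(ctx, "Draft a follow-up email after yesterday's call."))
```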

Build a Simple Validation Layer

Wherever AI touches critical workflows, add lightweight guardrails. Always review customer-facing content personally.
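
Here is one lightweight guardrail. It assumes you keep an approved fact sheet and flags every figure in an AI draft that doesn’t appear on it. That won’t prove a claim true, but it is the kind of check that flags fabricated “market benchmarks” before they reach a client. The regex is a rough pattern for rupee amounts, percentages, and plain numbers, not an exhaustive parser.

```python
import re

# Rough pattern for figures: optional ₹, digits with Indian or Western comma
# grouping, optional decimals, optional %. Not an exhaustive number parser.
FIGURE = re.compile(r"₹?\d+(?:,\d{2,3})*(?:\.\d+)?%?")


def unverified_figures(draft: str, approved_facts: set[str]) -> list[str]:
    """Return every figure in the draft that is not on the approved fact sheet."""
    return [fig for fig in FIGURE.findall(draft) if fig not in approved_facts]


draft = ("Market benchmarks show a 38% conversion lift at ₹4,999, "
         "versus our list price of ₹5,499.")
flags = unverified_figures(draft, approved_facts={"₹5,499"})
if flags:
    print("Hold for review, unverified figures:", flags)
```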

Design Workflows That Don’t Require True Reasoning

If your workflow requires causal chains or object tracking, pure LLMs will break. You handle the reasoning. AI handles pattern generation.

Keep a Human in the Loop for Everything That Matters

Every AI output that affects revenue, customers, or brand gets human eyes. No exceptions.
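
To make “no exceptions” mechanical instead of a matter of memory, you can put the rule in the publishing path. Here is a minimal sketch with hypothetical names. Drafts tagged as touching revenue, customers, or brand cannot go out without a named human approver.

```python
from dataclasses import dataclass, field
from typing import Optional

HIGH_IMPACT = {"revenue", "customer", "brand"}


class ApprovalRequired(Exception):
    """Raised when high-impact AI output has no human sign-off."""


@dataclass
class Draft:
    text: str
    impact_tags: set[str] = field(default_factory=set)
    approved_by: Optional[str] = None  # name of the human reviewer, if any


def publish(draft: Draft) -> str:
    """Gate in the publishing path; returns the text as a stand-in for sending it."""
    risky = draft.impact_tags & HIGH_IMPACT
    if risky and not draft.approved_by:
        raise ApprovalRequired(f"needs human review ({', '.join(sorted(risky))})")
    return draft.text


internal_note = Draft("Summary of this week's support tickets.")
client_email = Draft("Hi team, following up on the revised pricing.", {"customer", "revenue"})

publish(internal_note)        # low impact: goes straight through
try:
    publish(client_email)     # blocked until a human signs off
except ApprovalRequired as err:
    print(err)
client_email.approved_by = "ops lead"
publish(client_email)         # now allowed
```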

The Real Limit Isn’t Intelligence—It’s Architecture

I still spend ₹60,000 a year on AI. But now I know exactly what I’m buying: the world’s fastest pattern-matcher, not a reasoning partner.

The ceiling is real. But it’s not a wall; it’s a boundary. On one side: fluency, creativity, speed. On the other: logic, persistence, reasoning.

Your job as a founder is to know which side you’re on and build accordingly.

Your takeaway: Those who win won’t be the ones with the biggest AI budgets. They’ll be the ones who understand the architecture, respect the boundaries, and build systems that turn statelessness into a disciplined workflow.